The impact of an AWS S3 Bucket Takeover can range from nothing at all to account takeover or even RCE. In this article, we’ll show you how to find it and maximize its impact.

What is AWS S3 Bucket Takeover?

If you are familiar with the base attack, feel free to jump to the next sections.

AWS S3 Bucket Takeover refers to a security vulnerability that occurs when an Amazon Web Services (AWS) Simple Storage Service (S3) bucket is misconfigured, allowing unauthorized users to take control of the bucket. S3 is a widely used cloud storage service provided by AWS, and it hosts files and data for countless applications and websites.

The takeover typically happens in two scenarios:

- Unclaimed S3 Buckets: If a bucket name is referenced in an application or a DNS record but the bucket itself is not created or is deleted, an attacker can create a bucket with the same name and potentially intercept data intended for the original bucket.

- Incorrect Permissions: Misconfigured bucket permissions may allow unauthorized users to list, read, write, or delete the contents of a bucket.

When do AWS S3 Bucket Takeovers occur?

Taking over someone else’s S3 bucket sounds like something that AWS shouldn’t allow. And it doesn’t. When a website serves its files directly from s3.amazonaws.com, you’d need a 0-day in AWS to claim its bucket.

However, many websites don’t want to use Amazon’s domain but prefer their pretty ones like files.target.com. The setup is quite easy. They need 2 things:

- An S3 bucket, let’s say its name is targetbucket.

- A DNS record linking their website with the bucket.

This DNS record can look like this.

files.target.com. 3600 IN CNAME targetbucket.s3.amazonaws.com.

So, where’s the problem? AWS S3 Bucket Takeover occurs when a company changes or removes a bucket and forgets to remove the DNS record that points to it.

How to detect AWS S3 Bucket Takeover?

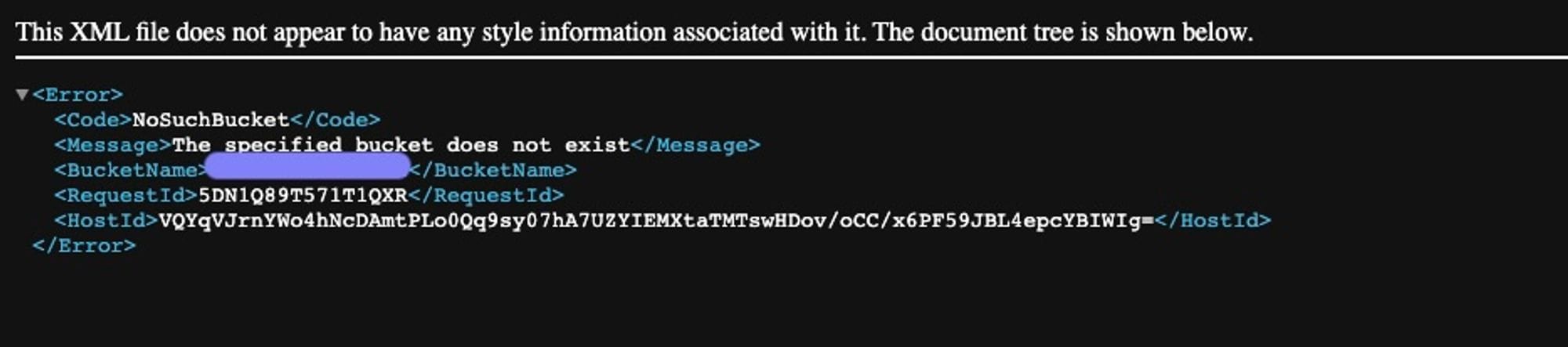

You can simply make an HTTP GET request to the base path ("/") and check whether the response contains the error The specified bucket does not exist. You should see something like this:

If you see this message, the takeover is possible! If you take away only one fact from this article, make it this one. The rest you can Google later.
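The check above can be sketched in a few lines of Python. This is a minimal sketch, not a full scanner: the function names are hypothetical, and it assumes the error text quoted in this article appears verbatim in the response body.

```python
import urllib.error
import urllib.request

# Error text quoted in the article; assumed to appear verbatim in the body.
MISSING_BUCKET_MARKER = "The specified bucket does not exist"


def looks_like_missing_bucket(body: str) -> bool:
    """Return True when a response body contains the missing-bucket error."""
    return MISSING_BUCKET_MARKER in body


def fetch_body(url: str, timeout: float = 10.0) -> str:
    """GET the URL and return the body, even when the status is 4xx/5xx."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.read().decode("utf-8", errors="replace")
    except urllib.error.HTTPError as err:
        # urllib raises on error statuses, but the error still carries the body.
        return err.read().decode("utf-8", errors="replace")
```

Calling looks_like_missing_bucket(fetch_body("http://files.target.com/")) would then flag a candidate. Only probe hosts that are in scope for your program.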

To confirm the finding and get the bucket’s name, run the dig CNAME files.target.com command to check the DNS record. After that, you know the dangling domain and the bucket name, which is all the information you need for the takeover.
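If you check many domains, extracting the bucket name from the CNAME target is easy to script. The helper below is a hypothetical sketch: its pattern covers the plain endpoint from the example record plus common regional and website-endpoint forms, and it is not an exhaustive list of S3 hostnames.

```python
import re
from typing import Optional

# <bucket>.s3.amazonaws.com, plus regional and website variants such as
# <bucket>.s3.eu-west-1.amazonaws.com or <bucket>.s3-website-us-east-1.amazonaws.com.
# Assumption: this pattern is illustrative and does not cover every endpoint style.
_S3_HOST = re.compile(
    r"^(?P<bucket>.+)\.s3(?:[.-]website)?(?:[.-][a-z0-9-]+)?\.amazonaws\.com$"
)


def bucket_from_cname(target: str) -> Optional[str]:
    """Return the bucket name encoded in a CNAME target, or None if not S3."""
    host = target.strip().rstrip(".").lower()  # drop the trailing DNS root dot
    match = _S3_HOST.match(host)
    return match.group("bucket") if match else None
```

You could feed it the output of dig +short CNAME files.target.com, which prints only the record’s target.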

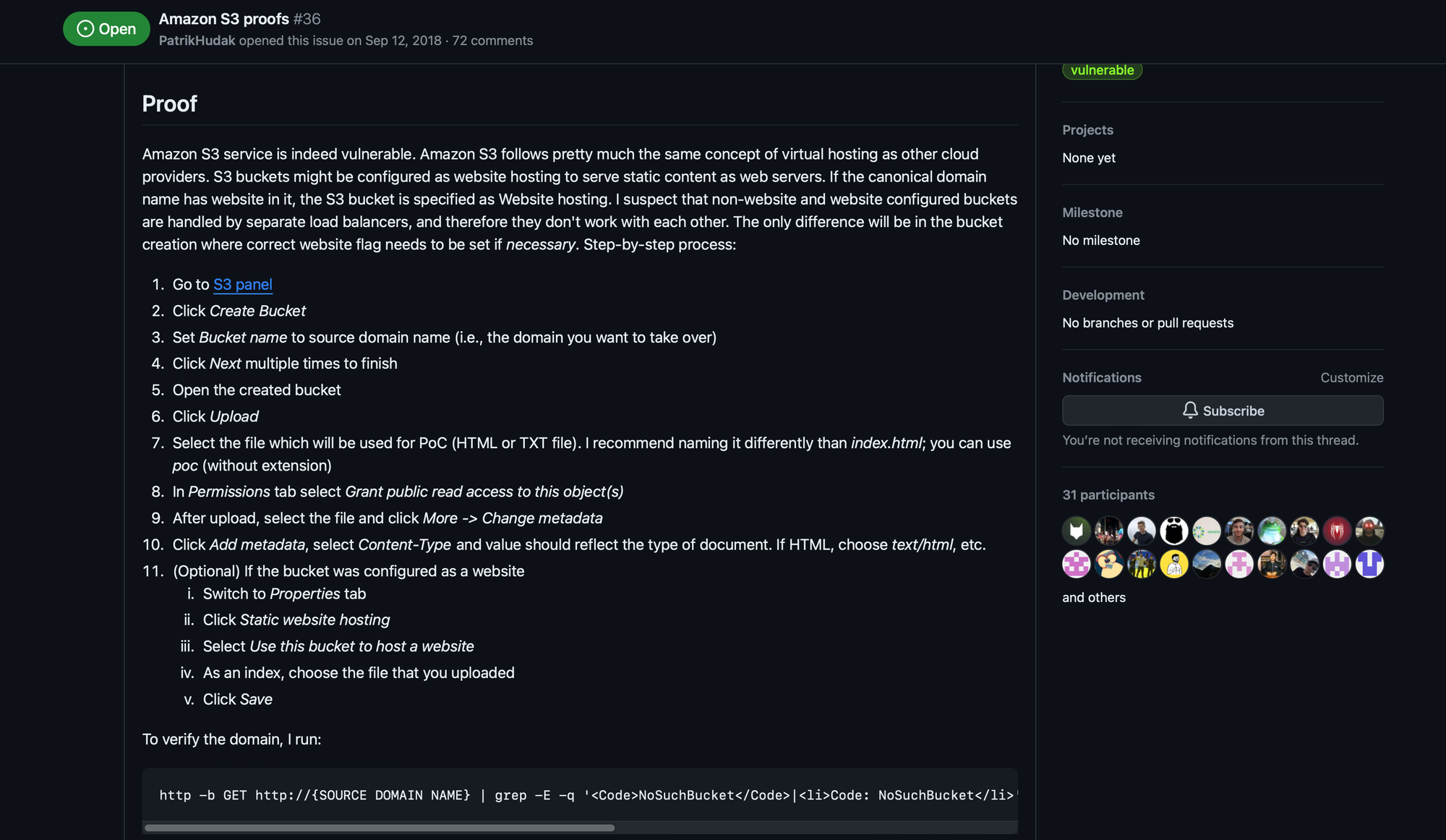

To perform the takeover, go to the AWS S3 console and create a bucket with that name. The exact steps are listed in the excellent can-i-take-over-xyz repository. It’s also one of the best places to ask for help when you think the bucket can be taken over but you can’t make it work.

How to escalate AWS S3 Bucket Takeover?

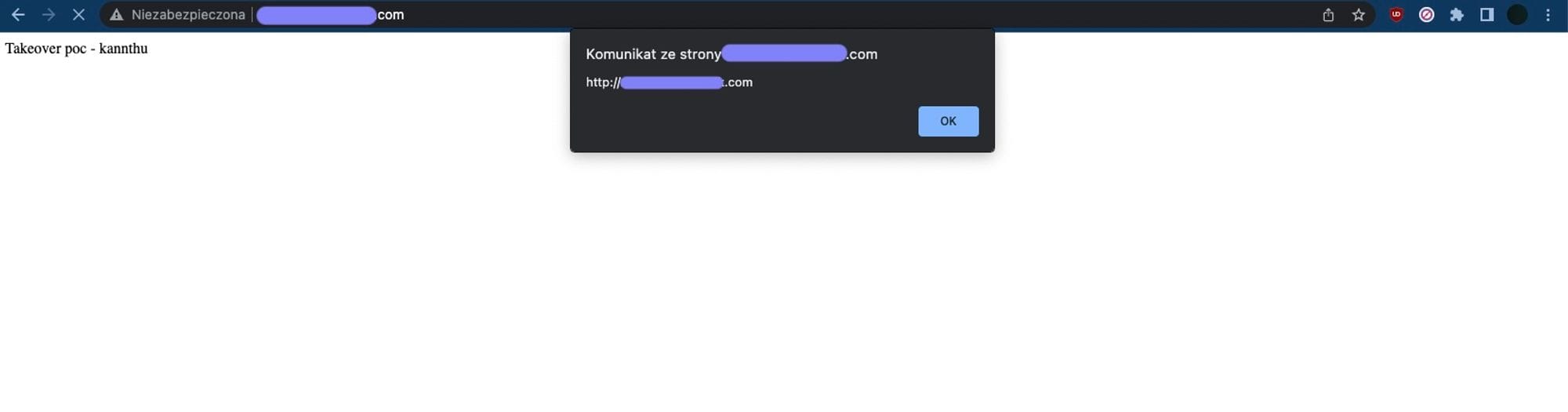

S3 Bucket Takeover allows you to host any file, including an XSS payload under the company’s domain, so... we should go straight to the report submission?

No, you should try to escalate it! First, assess the impact.

If you were able to take over the bucket, the company should no longer be using it. But shouldn’t and doesn’t are not the same. In a big organization, it is never as easy as announcing a storage migration on Monday and having everyone change their code by Friday.

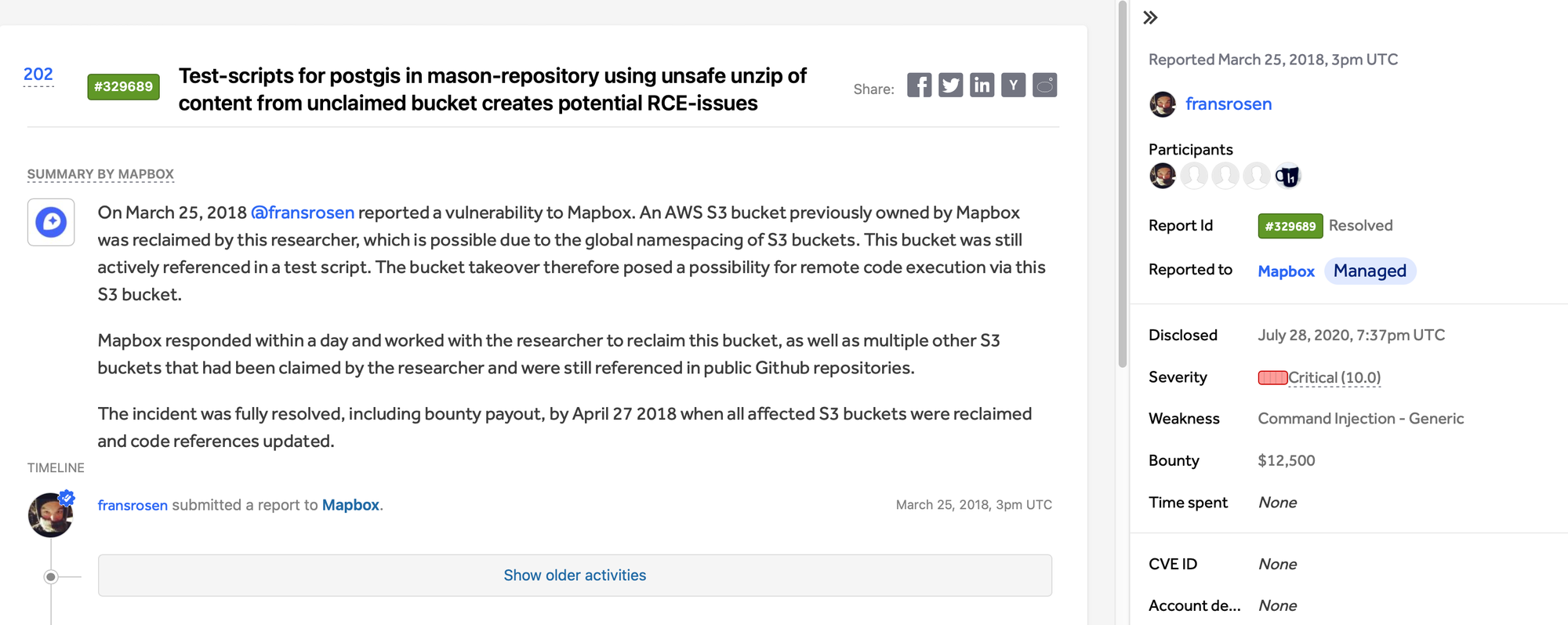

For example, Mapbox forgot that one of their scripts still pulled a file from an unused S3 bucket.

This could lead to RCE via ZipSlip, so Frans Rosén took over the S3 bucket, and Mapbox rewarded him with $12,500.

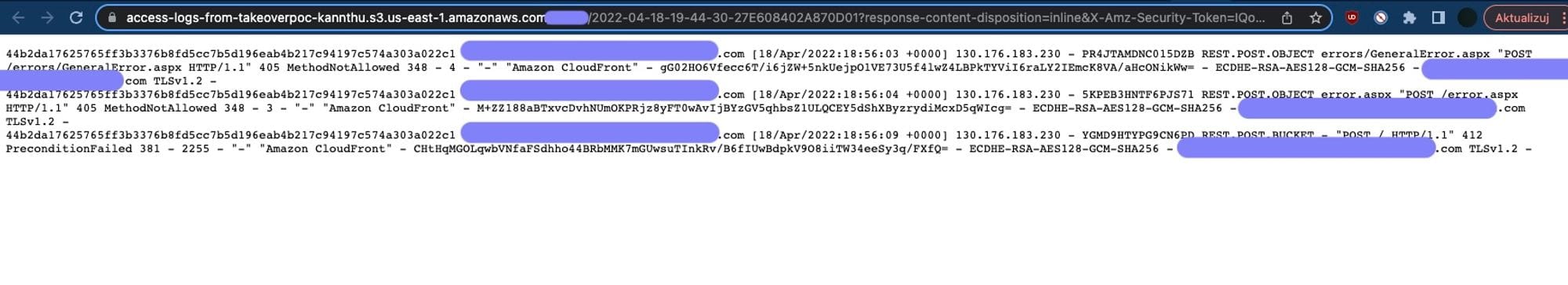

Logging requests to the bucket

How can you know about an internal script trying to pull a file from your bucket? You can turn on logging in the AWS S3 console. It lets you see every file that is being requested. The AWS documentation on server access logging explains what is logged.

If you notice a path like targettestscript.sh or targettestfile.html, try hosting real files under those locations with payloads that will send you blind RCE/blind XSS callbacks.
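Once logs start arriving, a small script can surface the paths that clients keep requesting but that don’t exist yet. The sketch below assumes the space-delimited S3 server access log layout, where the operation, key, and HTTP status are the 7th, 8th, and 10th fields. The function name is hypothetical and the parsing is deliberately simple.

```python
import re
from collections import Counter

# A token is a [bracketed timestamp], a "quoted string", or a run of non-spaces.
_TOKEN = re.compile(r'\[[^\]]*\]|"[^"]*"|\S+')


def missing_keys(log_lines, status="404"):
    """Count object keys that were requested via GET and answered with `status`."""
    counts = Counter()
    for line in log_lines:
        fields = _TOKEN.findall(line)
        if len(fields) < 10:
            continue  # not a complete access log record
        operation, key, http_status = fields[6], fields[7], fields[9]
        # Assumption: object reads are logged as REST.GET.OBJECT or WEBSITE.GET.OBJECT.
        if operation.endswith("GET.OBJECT") and key != "-" and http_status == status:
            counts[key] += 1
    return counts
```

Keys that show up repeatedly with a 404 are the ones worth hosting a file under.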

While very profitable, it’s not the most common scenario. Usually, an AWS Bucket Takeover gives you an XSS. But read carefully because the impact of this XSS can be none.

Escalation of XSS on a subdomain

If your XSS is on a subdomain of your main target, like files.target.com, you have a foot in the door. If you are lucky, the cookies are scoped to .target.com. Then, you can pretty much exploit it as any other XSS.

If not, you can set your own cookies for .target.com and interfere with the application this way. Alternatively, because you have gained the ability to send same-site requests, you may be able to bypass CSRF protection that relies on SameSite cookies.
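Whether a cookie reaches your subdomain comes down to domain matching on its Domain attribute. The function below is a simplified sketch of that rule with a hypothetical name. It ignores host-only cookies (those set without a Domain attribute, which are sent only to the exact host that set them), as well as the Path, Secure, and HttpOnly attributes.

```python
def cookie_reaches_host(cookie_domain: str, host: str) -> bool:
    """Return True if a cookie with this Domain attribute is sent to `host`."""
    domain = cookie_domain.lower().lstrip(".")  # a leading dot is ignored
    host = host.lower().rstrip(".")
    # Match the domain itself or any subdomain of it, on a label boundary.
    return host == domain or host.endswith("." + domain)
```

For example, a session cookie scoped to .target.com reaches files.target.com, while one scoped to app.target.com does not.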

There’s a whole variety of things you can and can’t do depending on the concrete setup. We won’t dive into each of them because we’d need to cover pretty much the whole client-side security.

But unfortunately, your XSS will sometimes be on a completely different domain, e.g. targetusercontent.com, with no cookies and no authentication. Then, unless you can chain it with another bug, your XSS will be harmless.

While we’ve seen companies reward those anyway, you will probably end up with an Informational. The risk of a bug doesn’t depend on the bug class or the fact that you popped an alert. Your payout depends on the real threat to the company.

Other cases of AWS S3 Bucket Takeover

The detection of the basic S3 takeover is very easy to automate. It can be an advantage if you are relying on large-scale hunting or a downside if you are hacking manually. Without any automation, your odds of finding this simplest variation of the bug are quite slim.

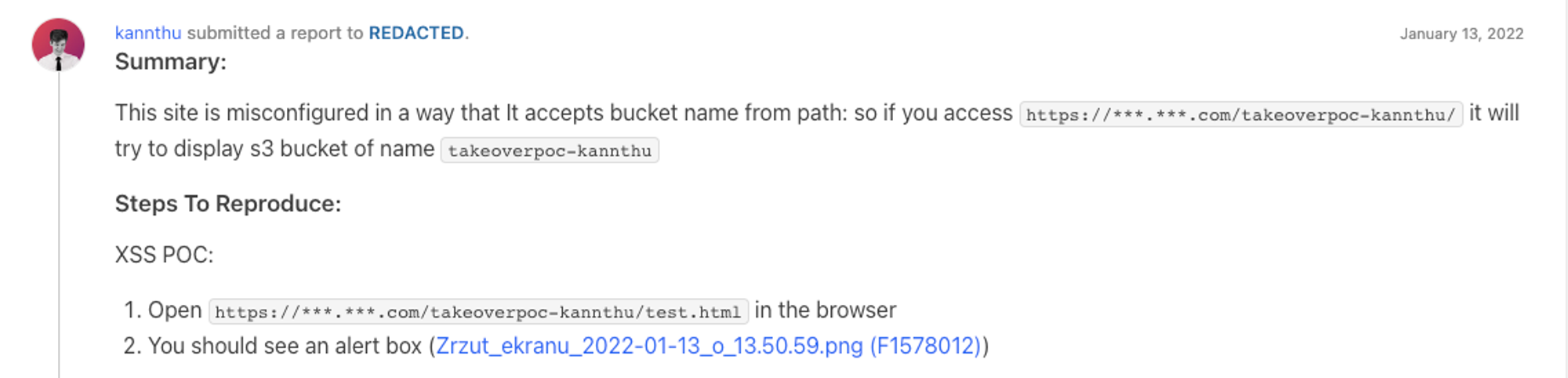

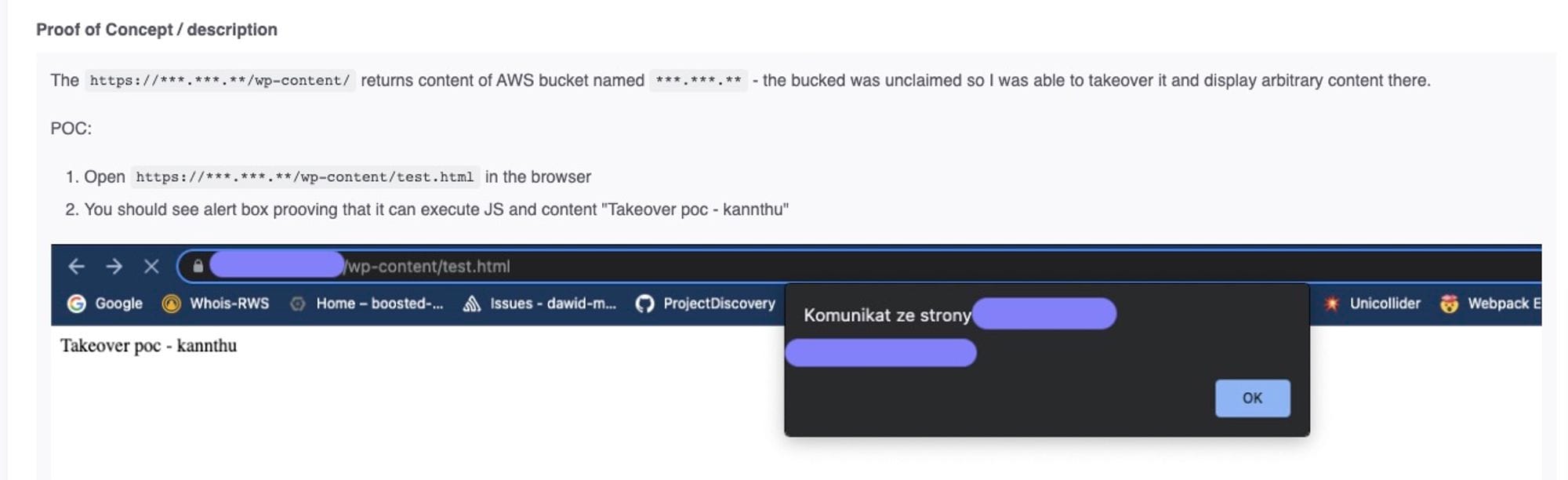

However, it’s not the only variation. Some companies, instead of linking the domain using DNS records, need more control so they write custom code to tunnel their requests to different S3 buckets.

For example, here we’ve seen a variation where the server would treat the first part of the path as a bucket name.

It allows hosting content from a company’s different S3 buckets under one domain. Or, to be precise, content from any bucket, including the XSS payload from our S3. It makes the attack even easier because you don’t even have to create a new bucket.
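To make this variation concrete, here is a hypothetical reconstruction of the kind of server-side mapping that causes it. It is not the code of any real target, and the function name and URL layout are assumptions for illustration only.

```python
def upstream_url(request_path: str) -> str:
    """Map /<bucket>/<key> to an S3 URL, trusting the bucket name from the path."""
    bucket, _, key = request_path.lstrip("/").partition("/")
    # Vulnerable pattern: nothing restricts `bucket` to the company's own buckets.
    return f"https://{bucket}.s3.amazonaws.com/{key}"
```

With logic like this, a request for /attackerbucket/xss.html is served from the attacker’s bucket under the company’s domain.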

Moreover, the S3 bucket doesn’t have to be served under the webroot. It can be served under any path, which an automation that only scans / would miss.

This shows that there are many variations of the S3 Bucket Takeover, so don’t limit your detection to looking at DNS records.

How to automate the detection of AWS S3 Bucket Takeover?

VIDOC Automated Module Scan is a feature that allows you to schedule your Module Scan to run automatically on a single target or targets from Recon. You will get a notification when your scan finds something interesting.

- Create an account

- Go to “Scanning” -> “Automation” and click “Create new automated scan”

- Provide a name for your Automated Scan

- Search for "AWS takeover" and select modules

- Select a target or targets from Recon, or provide the URL of a target

- Done! Your Automated Scan will run on a regular basis:)

Summary

To sum up, the most important takeaway for finding S3 takeovers is to never overlook the error The specified bucket does not exist. When you see it, create a bucket with that name, set up logging, and either plant a file that someone requests or exploit the S3 takeover as a regular XSS.

Useful links

- Vidoc Security Lab | www.vidocsecurity.com
